Embodied Intelligence

Our Embodied Intelligence research focuses on how intelligence emerges from the interaction between an agent's physical body and its control systems. We develop AI methods that leverage this physical embodiment to enhance adaptability in changing environments, creating more robust and responsive autonomous systems.

Embodied Intelligence Demos

To make our embodied intelligence research more visible, we highlight two recent robot demos here, covering tabletop manipulation, object interaction, and task execution on a real platform.

Tabletop Grasping and Block Manipulation

Demonstrates target perception, grasp execution, and basic manipulation ability in a structured tabletop setting.

Container Perception and Interaction

Highlights visual understanding, action planning, and multi-step interaction with everyday objects.

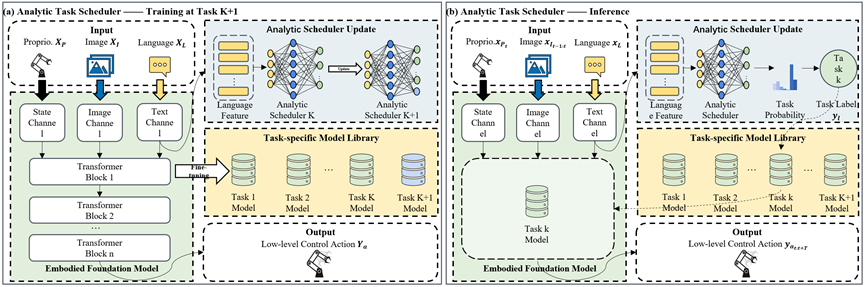

In this work, we propose the Analytic Task Scheduler (ATS), a novel framework for continual learning in embodied foundation models. ATS consists of a task-specific model library, where each model is fine-tuned independently on a single task, and an analytic scheduler trained using recursive least squares (RLS) to learn the mapping between language instructions and task-specific models. We validate ATS on a real-world robot platform (RM65B), demonstrating superior resistance to forgetting and strong adaptability to task variations.
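To make the scheduler idea concrete, here is a minimal sketch of a recursive-least-squares update that maps instruction features to task indices. It is an illustration under stated assumptions, not the ATS implementation: the class name `AnalyticScheduler`, the toy bag-of-words `embed` function, the vocabulary, the regularization value, and the one-hot target encoding are all hypothetical, and a real system would use a language encoder for the features.

```python
import numpy as np

# Toy vocabulary for illustration only (assumption, not from ATS).
VOCAB = ["pick", "place", "block", "red", "open", "close", "container", "lid"]

def embed(instruction):
    """Hypothetical bag-of-words features standing in for a language encoder."""
    words = instruction.lower().split()
    return np.array([float(words.count(w)) for w in VOCAB])

class AnalyticScheduler:
    """Ridge-regression classifier updated recursively, one task at a time."""

    def __init__(self, feat_dim, reg=1.0):
        # R tracks (X^T X + reg * I)^-1 over all features seen so far.
        self.R = np.eye(feat_dim) / reg
        # W maps features to one score per registered task.
        self.W = np.zeros((feat_dim, 0))

    def add_task(self, X):
        """Register a new task from its instruction features X of shape (n, d)."""
        X = np.atleast_2d(np.asarray(X, dtype=float))
        # Add a zero column for the new task; earlier samples implicitly
        # carry a zero label for it, so the zero column is already optimal.
        self.W = np.hstack([self.W, np.zeros((self.W.shape[0], 1))])
        task_id = self.W.shape[1] - 1
        Y = np.zeros((X.shape[0], self.W.shape[1]))
        Y[:, task_id] = 1.0
        # Block RLS update via the Woodbury identity; old data is not revisited.
        K = self.R @ X.T @ np.linalg.inv(np.eye(X.shape[0]) + X @ self.R @ X.T)
        self.W = self.W + K @ (Y - X @ self.W)
        self.R = self.R - K @ X @ self.R
        return task_id

    def select(self, x):
        """Return the index of the task-specific model with the highest score."""
        if self.W.shape[1] == 0:
            raise ValueError("no tasks registered")
        return int(np.argmax(np.asarray(x, dtype=float) @ self.W))

# Usage: register two tasks, then route new instructions to a model index.
scheduler = AnalyticScheduler(len(VOCAB))
grasp_id = scheduler.add_task(np.stack([embed("pick red block"), embed("place block")]))
container_id = scheduler.add_task(np.stack([embed("open container lid"), embed("close container")]))
chosen = scheduler.select(embed("pick block"))
```

Because each update is a closed-form correction, adding a task in this sketch gives the same weights as refitting ridge regression on all instructions seen so far, which is one way to see why an analytic scheduler of this kind does not overwrite earlier tasks.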

We apply multi-agent systems to embodied grasping, where they handle environmental perception, complex task decomposition, and whole-process rethinking; each agent draws on a shared memory bank for token prediction. To align with human intent, we developed a dual-agent loop that improves the grasping success rate by 25% over the state of the art (SOTA). We also embed each agent's token output into a latent space for collaborative optimization.